The wicked problem of interoperability

Before I discuss interoperability in the building industry, I want you to think about a few scenarios from our not-too-distant past:

What if web developers had accepted perpetual ‘warfare’ between Microsoft and Netscape browsers in the mid-1990s as a cost of doing business? What if web standards were never taken seriously while vendors continued to prioritize their own proprietary browser features for competitive advantage?

Now hold that in the back of your mind. Let’s talk about the tools we use to make buildings…

A year ago, I published an article through the CASE blog focused on planning a building project for better interoperability. Poor interoperability within the building industry has resulted in measurable waste in the form of “rework, restarts, and data loss”. Worse still, building professionals are often put in a situation where solving problems with the ‘best tool for the job’ comes at the cost of not being able to fully leverage the data downstream. In the aftermath of the recently signed ‘interoperability agreement’ between Autodesk and Trimble, I thought it would be a good time to revisit and reflect on some of those ideas.

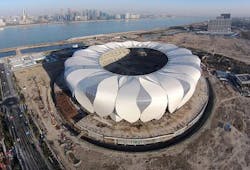

Interoperability has been a special interest of mine for a while now. When I was a designer at NBBJ, getting different design and analysis tools to exchange data and geometry was of high importance to delivering our work. In 2010, I was fortunate enough to have a paper published as part of the ACADIA conference titled “Makeshift: Experiments in Information Exchange and Collaborative Workflows”. The paper documented several of the workflow solutions being deployed on the Hangzhou stadium, including the use of centralized databases and API connections to facilitate data and geometry exchange between tools such as Revit, Grasshopper, and AutoCAD. At the time of the project, there were no special plugins or well-defined workflows available that met the project needs. Many of the tools had to be developed from scratch and were specific to the unique design criteria of the project.

Shortly after the Hangzhou design effort came to a close, I released my first open source plugin, Slingshot, which allowed Grasshopper to connect directly to relational databases. When I joined CASE, one of my pet projects was to expand on this idea and develop a new CASE Exchange application that was tied to our interoperability service for project planning and execution. Soon after, I released Rhynamo, an open source project that allows Revit with Dynamo to read Rhino files using OpenNURBS. We have also seen firms like Thornton Tomasetti invest in their own interoperability platform, TTX, and products like Flux are coming into the marketplace touting ‘Your Design Tools, Working Together.’

Yet for all the tools available which help to break down the walled gardens of building applications, interoperability still remains a wicked problem in the building industry. The problem of interoperability cuts deep into interdependent issues of the marketplace of tools and our execution processes.

The Dominance of Proprietary Tools

Stakeholders in the building industry produce output almost entirely through proprietary products, some of which seem to dominate the marketplace. For example, Revit’s market presence has arguably set a kind of ‘de facto’ standard for many in the industry. While Revit provides a rather substantial API and is widely used, it is nevertheless a ‘closed’ product. Access to the data and the availability of the API are provided at the discretion of the vendor, and major decisions happen behind closed doors. Most vendors take the same approach with their products, leaving industry professionals to concoct limited or ‘one-off’ solutions that dance across a multitude of software APIs and file formats, each one different from the other.

Amidst our proprietary tools, we have seen the introduction of standard specifications. IFC (Industry Foundation Classes) and COBie are two examples of ‘neutral’ data formats designed to facilitate interoperability in the building industry. Yet all too often support for these formats is positioned as a limited compatibility feature within a product rather than an integral data format at the foundation of our tools. This has led us to…

The Rise of Middleware

As we continue to find solutions to satisfy our immediate production needs, we find ourselves operating in a paradigm driven by middleware. File formats, API connections, and even new products have been devised to create limited layers of compatibility among different tools. These may partially satisfy our near-term needs, but we must also be critical of accepting this as a long-term solution.

These tools ultimately have to be designed around the nuances and idiosyncrasies of the products they support. The reworking of a proprietary API, a changed feature, or other product decisions can create a moving target for interoperability tools and introduce unforeseen breaking changes to the workflow. Furthermore, any encumbrances inherent to the proprietary products can translate to a larger network effect of data issues… as the old saying goes, your team can only be as good as its weakest player.
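To make the "moving target" concrete, here is a minimal sketch of the adapter pattern that much middleware follows. Everything in it is hypothetical: `NeutralWall`, the two "tools", and their field names (`len_mm`, `Length`, `guid`) are invented for illustration and do not correspond to any real product API or file format. The point is only that each adapter is written against a vendor's current field names and units, so a renamed field upstream breaks the translation.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class NeutralWall:
    """Hypothetical neutral record shared by every tool in the workflow."""
    element_id: str
    length_m: float


def from_tool_a(record: dict) -> NeutralWall:
    # Assumption: "Tool A" exports lengths in millimetres under "len_mm".
    return NeutralWall(str(record["id"]), record["len_mm"] / 1000.0)


def from_tool_b(record: dict) -> NeutralWall:
    # Assumption: "Tool B" exports lengths in feet under "Length".
    return NeutralWall(str(record["guid"]), record["Length"] * 0.3048)


def translate(record: dict,
              adapter: Callable[[dict], NeutralWall]) -> Optional[NeutralWall]:
    """Run an adapter; return None if the vendor record no longer matches it."""
    try:
        return adapter(record)
    except KeyError as missing:
        # A renamed or removed vendor field surfaces here as a breaking change.
        print(f"{adapter.__name__} failed: missing field {missing}")
        return None


if __name__ == "__main__":
    # Works while the vendor keeps the field names the adapter expects.
    print(translate({"id": 7, "len_mm": 2500}, from_tool_a))
    # Breaks as soon as a (hypothetical) release renames "Length".
    print(translate({"guid": "abc", "length_ft": 10}, from_tool_b))
```

Note that the neutral record itself is the easy part. The maintenance burden sits in the adapters, which have to track every product they touch.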

While middleware solutions can arguably provide more streamlined workflows in the near term, they also:

- Introduce their own special kind of data management and maintenance to be layered into the production process.

- Have to play within the limitations of the proprietary products they support.

- Are often designed for a limited scope within the building design and construction lifecycle.

This is far from the perfect ‘interoperability’ end state and is perhaps best thought of as a stepping stone towards something better…

The Future – Interoperable by Design?

I can’t help but wonder: Instead of creating variations on tools that navigate our existing world of closed proprietary software, what if the building design and construction tools we use today were interoperable by design?

Certainly the efforts of organizations like buildingSMART have started this conversation with data standards like IFC. However, if the dialog is going to have the impact it needs, it will require a larger movement and conversation involving industry professionals, vendors, and academics. And perhaps most importantly: customers need to demand it and not be content with business as usual.

Like the rise of web standards that put the ‘browser wars’ in check, I see this conversation as a critical evolution to realize the full potential for a truly data-driven building industry.

Some thoughts on what an interoperable future might look like:

- Open, public standards become adopted as the basis for product design and functionality.

- Creators of AEC tools compete on the basis of quality of implementation and compliance.

- Interoperability exists more as an ‘invisible state’ (not a special compatibility feature or an additional middleware product).

- Common protocols among tools (and APIs) allow industry stakeholders to benefit from a multitude of connections into the building datastream at all stages.

- Transparent evolution of data-driven processes in the building industry.

Click here for the original Proving Ground post.

About the Author

Nathan Miller

Nathan Miller is the Founder of Proving Ground (https://provingground.io/), an AEC consulting practice that creates data-driven building processes that increase productivity, provide certainty, and enhance the human experience. As strategic advisers, the firm works with business leaders to define strategic plans focused on innovation with data. As educators, they teach your staff new skills by facilitating classroom-style workshops in advanced technology. As project consultants, they work with teams to develop custom tools and implement streamlined workflows.