In the rush to slash energy consumption in power-hungry data centers, design teams, equipment manufacturers, and tech companies have been developing clever, low-energy cooling solutions—from Facebook’s open-rack server setup with exposed motherboards, to Skanska’s eOPTI-TRAX liquid refrigerant coil system, to Google’s evaporative cooling schemes.

Solutions like these have helped data center facility operators achieve unprecedented energy performance levels, with power usage effectiveness (PUE) ratios dipping below 1.10 in some instances. PUE is total facility energy divided by the energy consumed by the IT equipment, so a ratio below 1.10 means that less than 10% of a facility's total energy consumption goes to noncomputing functions, such as air-conditioning and lighting.
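The link between a PUE below 1.10 and a noncomputing share below 10% is simple arithmetic. If total energy equals IT energy times PUE, the overhead is IT energy times (PUE - 1), so the overhead's share of the total is (PUE - 1) / PUE. The short Python sketch below works through that calculation. The function names are illustrative and do not come from any particular metering tool.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_share_of_total(pue_value: float) -> float:
    """Fraction of total facility energy spent on non-IT loads (cooling, lighting, etc.).

    total = IT * PUE and overhead = IT * (PUE - 1),
    so overhead / total = (PUE - 1) / PUE.
    """
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return (pue_value - 1.0) / pue_value


if __name__ == "__main__":
    # A facility drawing 1,100 kWh in total to run 1,000 kWh of IT load
    print(pue(1100, 1000))
    print(round(overhead_share_of_total(1.10), 3))
```

At a PUE of 1.10 the overhead share works out to about 0.091, or roughly 9% of total energy, which is why any PUE below 1.10 keeps noncomputing loads under 10%.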

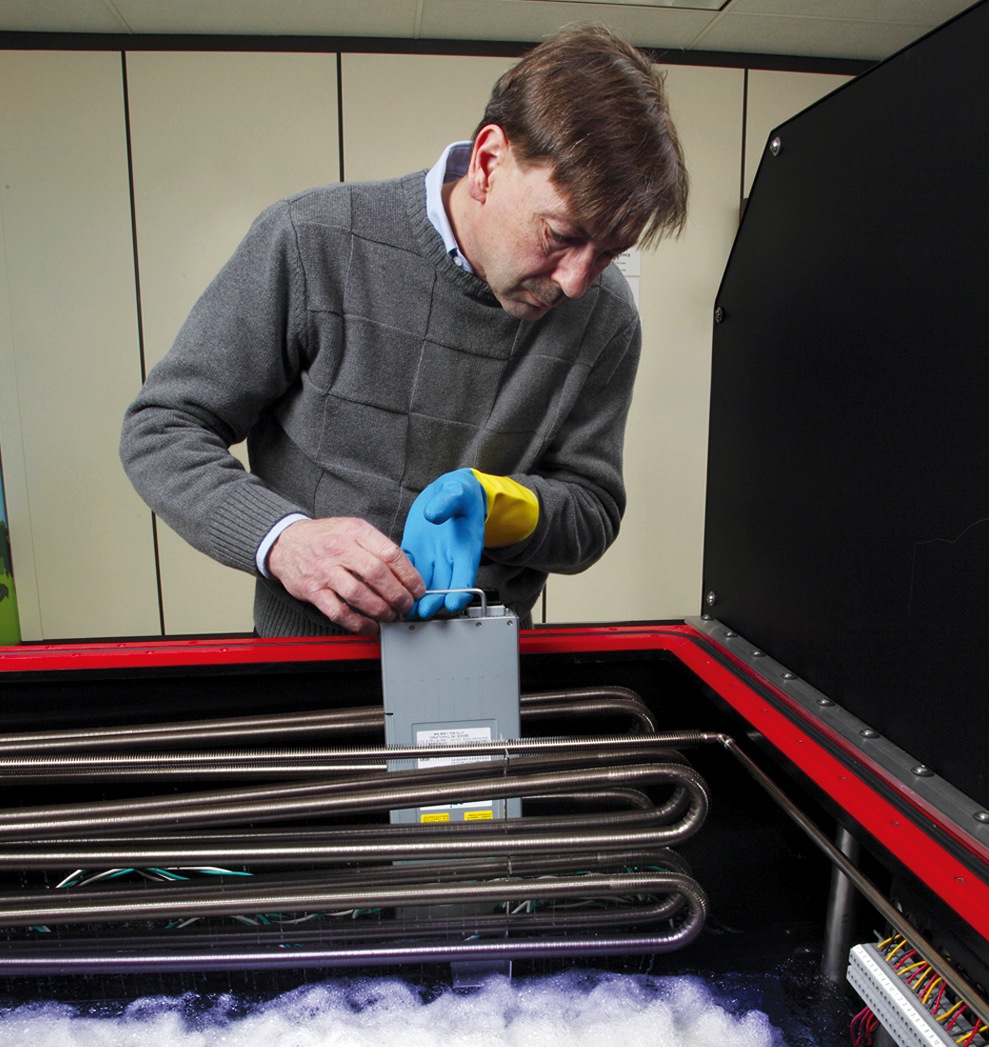

Now, a new technology promises to push the limits of data center energy efficiency even further. Called immersive cooling, it uses a liquid instead of air to cool the servers. LiquidCool Solutions, Green Revolution, and 3M are among the pioneers of this technology. Each system works a bit differently, but the basic idea is to submerge the motherboards in tanks filled with nonconductive fluid, which absorbs the heat generated by the processors.

LiquidCool Solutions, for example, uses an enclosed server module and pumps dielectric fluid through the enclosure. Green Revolution submerges servers in tubs of dielectric oil and circulates the oil through the tubs. 3M also uses the tub approach, but its fluid boils off the hot components and the vapor is then condensed to reject the heat.
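The difference between the pumped-liquid approaches and the boiling approach shows up in the basic heat balance. A single-phase system carries heat away as a temperature rise in the liquid (Q = m·cp·ΔT), while a two-phase system absorbs it as latent heat when the fluid boils (Q = m·h_fg). The Python sketch below compares the two. The function names and the fluid properties in the usage note are illustrative placeholders, not data for any of the vendors' fluids.

```python
def single_phase_flow_kg_s(heat_kw: float, cp_kj_per_kg_k: float, delta_t_k: float) -> float:
    """Liquid mass flow needed to carry heat_kw as sensible heat.

    kW = kJ/s, so kJ/s / (kJ/(kg*K) * K) gives kg/s.
    """
    if cp_kj_per_kg_k <= 0 or delta_t_k <= 0:
        raise ValueError("cp and temperature rise must be positive")
    return heat_kw / (cp_kj_per_kg_k * delta_t_k)


def two_phase_boiloff_kg_s(heat_kw: float, latent_heat_kj_per_kg: float) -> float:
    """Fluid that must boil per second (and later be condensed) to absorb heat_kw."""
    if latent_heat_kj_per_kg <= 0:
        raise ValueError("latent heat must be positive")
    return heat_kw / latent_heat_kj_per_kg


if __name__ == "__main__":
    # Hypothetical 50 kW tank; property values are placeholders, not datasheet figures
    print(single_phase_flow_kg_s(50, cp_kj_per_kg_k=2.0, delta_t_k=10))
    print(two_phase_boiloff_kg_s(50, latent_heat_kj_per_kg=100))
```

With these placeholder values, the single-phase loop must circulate 2.5 kg/s of liquid, while the two-phase tank boils off 0.5 kg/s of fluid that the condenser returns as liquid. Real designs should substitute the actual fluid's datasheet properties.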

Cooling with liquid at the server level greatly reduces the need for air-conditioning, or even eliminates it in some climates. The same goes for traditional HVAC equipment and systems, such as chillers, fan units, and raised floors.

The Allied Control 500 kW immersion-cooled data center in Hong Kong is capable of delivering a PUE of just 1.02. The standard 19-inch server racks use 3M Novec Engineered Fluids to enable tight component packaging for greater computing power in less space, according to Allied. Its open-bath design permits easy access to hardware and eliminates the need for pressure vessel enclosures and charging/recovery systems. PHOTO: COURTESY ALLIED CONTROL

“In most parts of the world, compressorized cooling would not be required with immersive cooling, since the liquid temperatures can be at a level where direct heat rejection using outdoor condensers or cooling towers would be sufficient,” says Thomas Squillo, PE, LEED AP, Vice President with Environmental Systems Design, who is currently researching the technology for the firm’s data center clients. “The fan energy is also eliminated, both in the HVAC system and in the server itself. Fluid pumping energy is very low.”
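Squillo's point about direct heat rejection can be framed as a simple hourly check. Outdoor equipment can cool the fluid only to some "approach" above ambient (dry-bulb for a dry cooler or condenser, wet-bulb for a cooling tower), so compressors are unnecessary whenever ambient plus approach stays at or below the highest fluid temperature the servers can accept. The Python sketch below counts such hours. The function names, approach value, and temperature limit are hypothetical assumptions, not figures from Environmental Systems Design or any vendor.

```python
from typing import Iterable


def compressor_free(ambient_c: float, approach_k: float, max_supply_c: float) -> bool:
    """True if outdoor heat rejection alone can supply fluid at or below max_supply_c.

    ambient_c is dry-bulb for a dry cooler/condenser, or wet-bulb for a cooling tower.
    approach_k is how close to ambient the equipment can cool the fluid (assumed).
    """
    return ambient_c + approach_k <= max_supply_c


def free_cooling_fraction(
    hourly_ambient_c: Iterable[float], approach_k: float, max_supply_c: float
) -> float:
    """Share of hours in which no compressorized cooling would be needed."""
    hours = list(hourly_ambient_c)
    if not hours:
        raise ValueError("need at least one hourly temperature")
    ok_hours = sum(compressor_free(t, approach_k, max_supply_c) for t in hours)
    return ok_hours / len(hours)


if __name__ == "__main__":
    # Hypothetical hourly temperatures, 5 K approach, 40 C fluid supply limit
    print(free_cooling_fraction([20, 30, 36, 38], approach_k=5, max_supply_c=40))
```

In this example two of the four hours qualify, so the function returns 0.5. The higher the fluid temperature the servers tolerate, the larger that fraction becomes, which is why warm immersion fluid can make compressors unnecessary in most climates.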

Other advantages of the cooling technology, according to Squillo:

• Increased performance and service life of the computer chips by eliminating heat buildup and problems related to contaminated air and dust.

• Ability to deploy data centers in extremely harsh environments without greatly impacting energy performance.

• Potential construction cost savings by downsizing or eliminating traditional HVAC systems.

Other than a few pilot projects, including a Bitcoin mining data center in Hong Kong and a Lawrence Berkeley National Laboratory-led installation in Chippewa Falls, Wis., immersive cooling technology is largely untested. A year into the Bitcoin pilot, the data center operator reported a 95% reduction in cooling costs.

AEC professionals are starting to realize the potential for immersive cooling, especially for high-performance computing centers and consolidated, high-density data centers.

“Large data centers that have many homogeneous machines at high density—like those operated by Internet and cloud providers—are a good application,” says Squillo. “Small footprint and minimal energy use are very important due to the volume of servers. These can be deployed in remote areas where space and energy are cheap, but where air quality may be a concern, without having to worry about the data center air.”

TRICKY DESIGN CONSIDERATIONS

Before the technology can be implemented, says Squillo, several nettlesome design factors specific to immersive cooling have to be addressed:

• Piping distribution to the racks and cooling units requires redundancy and valving to accommodate equipment maintenance without disrupting server performance.

• Additional equipment and space are needed to drain fluid from the tanks for server maintenance.

• Local code requirements may limit the amount of fluid that can be stored in a single room.

• For the foreseeable future, it’s unlikely that a large data center would be 100% liquid-immersion cooled. This means provisions will have to be made for both air- and liquid-cooling systems, which will require additional space in the data hall and mechanical room.
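The fluid-storage consideration lends itself to a quick design check during layout. The Python sketch below totals dielectric fluid by room and flags any room over a limit. The `Tank` class, the function name, and the limit in the usage note are hypothetical; actual limits depend on the local code and on how the specific fluid is classified.

```python
from dataclasses import dataclass


@dataclass
class Tank:
    room: str            # room or fire area the tank sits in
    fluid_liters: float  # dielectric fluid charge in the tank


def rooms_over_limit(tanks: list[Tank], limit_liters_per_room: float) -> dict[str, float]:
    """Sum fluid per room and return only the rooms whose total exceeds the limit."""
    totals: dict[str, float] = {}
    for tank in tanks:
        totals[tank.room] = totals.get(tank.room, 0.0) + tank.fluid_liters
    return {room: vol for room, vol in totals.items() if vol > limit_liters_per_room}


if __name__ == "__main__":
    layout = [Tank("A", 600), Tank("A", 600), Tank("B", 500)]
    # Hypothetical 1,000-liter limit; check the governing code for the real value
    print(rooms_over_limit(layout, limit_liters_per_room=1000))
```

With two 600-liter tanks in room A and one 500-liter tank in room B, only room A (1,200 liters) is flagged, suggesting that its tanks be split across rooms or that the room be treated as a separate storage area under the applicable code.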

“I think that some form of this technology will definitely be the direction the data center market will take in the future,” says Squillo. “The market just needs to mature enough for owners to trust the technology and demand servers that are designed for a particular type of liquid cooling. In the short term, I see large companies and server manufacturers doing small-scale installations to test the concept, before wanting to implement it at a large scale.”

Related Stories

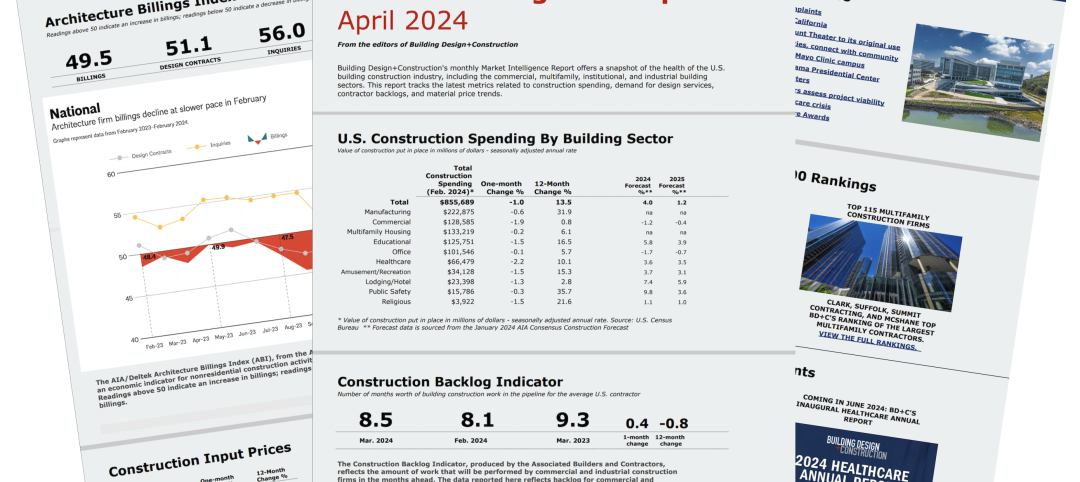

Construction Costs | Apr 18, 2024

New download: BD+C's April 2024 Market Intelligence Report

Building Design+Construction's monthly Market Intelligence Report offers a snapshot of the health of the U.S. building construction industry, including the commercial, multifamily, institutional, and industrial building sectors. This report tracks the latest metrics related to construction spending, demand for design services, contractor backlogs, and material price trends.

MFPRO+ New Projects | Apr 16, 2024

Marvel-designed Gowanus Green will offer 955 affordable rental units in Brooklyn

The community consists of approximately 955 units of 100% affordable housing, 28,000 sf of neighborhood service retail and community space, a site for a new public school, and a new 1.5-acre public park.

Construction Costs | Apr 16, 2024

How the new prevailing wage calculation will impact construction labor costs

Looking ahead to 2024 and beyond, two pivotal changes in federal construction labor dynamics are likely to exacerbate increasing construction labor costs, according to Gordian's Samuel Giffin.

Healthcare Facilities | Apr 16, 2024

Mexico’s ‘premier private academic health center’ under design

The design and construction contract for what is envisioned to be “the premier private academic health center in Mexico and Latin America” was recently awarded to The Beck Group. The TecSalud Health Sciences Campus will be located at Tec De Monterrey’s flagship healthcare facility, Zambrano Hellion Hospital, in Monterrey, Mexico.

Market Data | Apr 16, 2024

The average U.S. contractor has 8.2 months worth of construction work in the pipeline, as of March 2024

Associated Builders and Contractors reported today that its Construction Backlog Indicator increased to 8.2 months in March from 8.1 months in February, according to an ABC member survey conducted March 20 to April 3. The reading is down 0.5 months from March 2023.

Laboratories | Apr 15, 2024

HGA unveils plans to transform an abandoned rock quarry into a new research and innovation campus

In the coastal town of Manchester-by-the-Sea, Mass., an abandoned rock quarry will be transformed into a new research and innovation campus designed by HGA. The campus will reuse and upcycle the granite left onsite. The project for Cell Signaling Technology (CST), a life sciences technology company, will turn an environmentally depleted site into a net-zero laboratory campus, with building electrification and onsite renewables.

Codes and Standards | Apr 12, 2024

ICC eliminates building electrification provisions from 2024 update

The International Code Council stripped out provisions from the 2024 update to the International Energy Conservation Code (IECC) that would have included beefed up circuitry for hooking up electric appliances and car chargers.

Urban Planning | Apr 12, 2024

Popular Denver e-bike voucher program aids carbon reduction goals

Denver’s e-bike voucher program that helps citizens pay for e-bikes, a component of the city’s carbon reduction plan, has proven extremely popular with residents. Earlier this year, Denver’s effort to get residents to swap some motor vehicle trips for bike trips ran out of vouchers in less than 10 minutes after the program opened to online applications.

Laboratories | Apr 12, 2024

Life science construction completions will peak this year, then drop off substantially

There will be a record amount of construction completions in the U.S. life science market in 2024, followed by a dramatic drop in 2025, according to CBRE. In 2024, 21.3 million sf of life science space will be completed in the 13 largest U.S. markets. That’s up from 13.9 million sf last year and 5.6 million sf in 2022.

Multifamily Housing | Apr 12, 2024

Habitat starts leasing Cassidy on Canal, a new luxury rental high-rise in Chicago

New 33-story Class A rental tower, designed by SCB, will offer 343 rental units.